Project Maven has emerged as one of the most transformative and controversial chapters in modern military history. What began as an experiment in 2017 has, by 2026, become an indispensable pillar of the global defense architecture. This program is not only changing the "speed of war" but is also fundamentally redefining the process of human decision-making and the very ethics of combat. For candidates of the UPSC and other competitive examinations, the study of Project Maven is not merely a technical subject; it bridges critical aspects of General Studies Paper-2 (International Relations) and Paper-3 (Science & Technology and Internal Security). This report presents a comprehensive analysis of Algorithmic Warfare, its global consequences, and India’s indigenous response.

The New Era of Algorithmic Warfare: Introduction and Background

The nature of warfare has always been dictated by available technology, but the advent of Artificial Intelligence (AI) has pushed the "Speed of War" to a level where the processing capacity of the human brain is being left behind. Algorithmic Warfare refers to the use of data, machine learning, and computer vision in military operations to automate the process of identifying and engaging the enemy.

Project Maven, officially known as the 'Algorithmic Warfare Cross-Functional Team' (AWCFT), is the epicenter of this revolution. It was established in April 2017 by the U.S. Department of Defense (DoD) to solve a crisis in intelligence analysis. At that time, the U.S. military possessed thousands of hours of drone footage and satellite imagery but faced a massive shortage of human analysts to process it. Maven’s primary objective was to make this 'data overload' manageable through machine learning.

Between 2017 and 2026, Maven evolved from a limited experiment into a 'Program of Record.' This means it is now a permanent part of the U.S. defense budget, secured with long-term funding and widespread deployment. This development demonstrates that global military powers now view AI not as an auxiliary tool, but as essential infrastructure.

The Evolution of Project Maven: 2017 to 2026

The journey of Project Maven has been a complex blend of technical innovation and ethical controversy. Understanding its key milestones is crucial for UPSC aspirants as it illustrates how a government initiative, in collaboration with the private sector, achieves global impact.

Key Milestones and Developments

| Year | Significant Event | Impact/Result |
|---|---|---|
| 2017 | Establishment of Project Maven | Formation of the 'Algorithmic Warfare Cross-Functional Team.' |
| 2018 | Google's Protest and Exit | Google withdrew from the contract following internal employee protests. |
| 2020 | Entry of Palantir | Palantir became the lead technical contractor and developed the 'Maven Smart System.' |
| 2023 | Transfer to NGA | The program was declared a formal 'Program of Record' under the National Geospatial-Intelligence Agency. |
| 2025 | $10 Billion Contract | A major agreement between the U.S. Army and Palantir for data integration. |
| 2026 | Department-wide Expansion | Directives issued to deploy the Maven Smart System across the entire U.S. DoD. |

Project Maven’s initial focus was on analyzing drone video in the fight against ISIS. By 2026, however, its capabilities are no longer limited to object identification; it has become the primary means of compressing the "Kill Chain." The "Kill Chain" is the sequence of steps from identifying a target to neutralizing it, and compressing it means shortening the time that sequence takes. With Maven, this time has reportedly been reduced from hours to mere seconds.

Technical Architecture of the Maven Smart System (MSS)

The Maven Smart System (MSS) is the practical manifestation of Project Maven deployed on the battlefield. It operates on a Software-as-a-Service (SaaS) model and is developed primarily by Palantir Technologies. Its technical strength lies in data integration and real-time analysis.

1. Data Fusion and Visualization

The standout feature of MSS is 'Data Fusion.' It ingests data from diverse military sources—radar, video feeds, satellite imagery, weather data, and live troop locations—and presents them on a single interface. This provides commanders with a "live, synchronized view" of the theater of war. Analysts describe it as providing "transparent vision in dusty battlefields."
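The idea of fusing several feeds into one synchronized view can be illustrated with a minimal Python sketch. It is not how MSS is implemented; the `Observation` record, the `fuse_feeds` helper, and the sample feeds are all hypothetical names and values chosen for illustration. The sketch assumes each source already delivers its records in time order and simply merges them into a single timeline.

```python
import heapq
from dataclasses import dataclass


@dataclass(frozen=True)
class Observation:
    """Hypothetical record type: one report from one source."""
    timestamp: float  # seconds since a shared reference time
    source: str       # e.g. "radar", "video", "weather"
    detail: str       # short summary of what the source reported


def fuse_feeds(*feeds):
    """Merge per-source feeds (each already sorted by time) into one timeline."""
    return list(heapq.merge(*feeds, key=lambda obs: obs.timestamp))


# Illustrative sample data only, not real feeds.
radar = [Observation(1.0, "radar", "contact A"), Observation(4.0, "radar", "contact B")]
video = [Observation(2.5, "video", "vehicle seen"), Observation(3.0, "video", "vehicle stopped")]
weather = [Observation(0.5, "weather", "dust storm")]

timeline = fuse_feeds(radar, video, weather)
for obs in timeline:
    print(f"{obs.timestamp:>5.1f}s  {obs.source:<8} {obs.detail}")
```

A real fusion layer would also have to reconcile different coordinate systems, clock drift, and conflicting reports; the sketch covers only the time-ordering step.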

2. Computer Vision and Machine Learning

Maven’s algorithms use 'Convolutional Neural Networks' (CNNs) to scan millions of gigabytes of data. Its reported capabilities include:

Automated Identification: It can mark enemy vehicles, weapons, and command centers in dense forests, mountains, or crowded urban areas.

Pattern Recognition: It predicts threats by analyzing suspicious activities and "patterns of life," meaning the routine movements and behaviors observed at a location over time.

Processing Speed: Maven can reportedly analyze over 1 million hours of drone footage in a single day, scanning visual data about 80% faster than human analysts can.
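For readers unfamiliar with what the "convolution" in a CNN does, the following is a minimal pure-Python sketch of that single operation. It is a teaching example, not Maven's model: the `convolve2d` helper, the toy 4x4 image, and the hand-written edge kernel are assumptions made for illustration, whereas a trained CNN learns many such kernels from labeled data and stacks them in layers.

```python
def convolve2d(image, kernel):
    """Slide a small kernel over an image and sum the elementwise products.

    This is the 'valid' cross-correlation used inside CNN layers: the output
    shrinks because the kernel is only placed where it fits entirely.
    """
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    output = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            total = 0
            for di in range(kh):
                for dj in range(kw):
                    total += image[i + di][j + dj] * kernel[di][dj]
            row.append(total)
        output.append(row)
    return output


# Toy image: dark left half (0) and bright right half (1).
image = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]

# Hand-written kernel that responds to a dark-to-bright vertical edge.
kernel = [
    [-1, 1],
    [-1, 1],
]

feature_map = convolve2d(image, kernel)
for row in feature_map:
    print(row)  # each row is [0, 2, 0]: the response peaks where the edge lies
```

The feature map is non-zero only in the column where brightness changes, which is how early CNN layers pick out edges before later layers combine them into shapes such as vehicles or buildings.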

3. Decision Support and Recommendations

In its modern iterations, Maven does not just identify a target; it suggests the most precise time for an attack and the available weaponry to use. It assists commanders in analyzing potential outcomes of different scenarios, making the decision-making process faster than the "Speed of Thought."

Private Sector and Military Collaboration: The Google vs. Palantir Controversy

The trajectory of Project Maven has exposed a deep ethical divide between Silicon Valley tech giants and the defense establishment. This serves as a vital case study for UPSC students regarding the 'Ethics of Science.'

Google’s Ethical Stand and Internal Revolt: In 2018, when it was revealed that Google was providing image recognition technology to the Pentagon, over 3,000 employees signed an open letter demanding that the company withdraw. They argued that AI should not be used for targeted killing or direct warfare. Consequently, Google did not renew the contract and published its 'AI Principles,' which restricted the development of AI for weapons.

The Rise of Palantir and Anduril: Following Google's exit, companies like Palantir and Anduril filled the vacuum. Palantir CEO Alex Karp has argued that Western nations must possess capabilities that the rest of the world does not, and that shortening the "Kill Chain" through AI is a strategic necessity.

The 2026 Landscape: By 2026, the fault lines have shifted again. Companies like Anthropic, whose 'Claude' model was being used in Maven, demanded restrictions on tracking citizens and fully autonomous strikes, leading to a severance of ties with the military. Now, the Pentagon is looking toward new partners like OpenAI and xAI, who are potentially open to more "flexible" contracts.

Battlefield Applications: Ukraine, Gaza, and Operation Epic Fury

The theoretical benefits of Project Maven have been tested in the deadliest conflicts of recent years. Its performance has proved its effectiveness but also raised grave humanitarian concerns.

Ukraine: The AI 'Laboratory'

U.S. military officials have described the Ukraine-Russia conflict as the 'Laboratory' for Project Maven. Here, Maven was used to transform intelligence about enemy movements into an accessible digital platform, helping Ukrainian commanders make better strategic decisions based on real-time data.

Gaza: AI as the Primary Targeting Mechanism

Israel’s military operations in Gaza presented an early example of using AI as a primary targeting mechanism. Systems like 'The Gospel' were used to select physical targets (buildings and infrastructure), while AI databases like 'Lavender' reportedly marked as many as 37,000 people as potential targets. While this increased the speed of war, it came at the cost of high civilian casualties.

Operation Epic Fury (2026): Conflict with Iran

The 2026 conflict with Iran has become the first full-scale field test of an AI-integrated military machine. According to U.S. Central Command (CENTCOM), over 13,000 targets were struck under this operation, with 1,000 strikes occurring on the first day alone. MSS simplified the targeting workflow to the point where decision-making for commanders became a digital task akin to "left-click, right-click."

Operation Details (April 2026)

Operation Name: Operation Epic Fury

Strikes in first 24 hours: 1,000+ targets

Total Strikes (by Week 5): 13,000+

U.S. Military Casualties: 13 KIA, 381 Wounded (as of April 2026)

Lethal Autonomous Weapons Systems (LAWS) and the Threat of 'Killer Robots'

The most controversial aspect of Project Maven is its tilt toward autonomy. Human rights organizations and the UN have warned that this technology marks the beginning of the era of "Killer Robots," where life-and-death decisions are made by machines.

Ethical and Legal Concerns

Lack of Accountability: If an algorithm misidentifies an innocent civilian as a terrorist and attacks, who is responsible? A machine cannot be held accountable, and as human control diminishes, the responsibility of commanders becomes blurred.

Lack of Contextual Understanding: Machines cannot comprehend the complex human emotions, cultural contexts, and the test of "Proportionality" on a battlefield—which is the core of International Humanitarian Law (IHL).

Digital Dehumanization: AI reduces humans to 'data points,' making the use of violence psychologically easier for the operator.

The UN’s Role and the 2026 Deadline

UN Secretary-General António Guterres has described autonomous weapons as "politically unacceptable and morally repugnant." He has called on member states to conclude a legally binding treaty by 2026 to prohibit autonomous weapons that function without human control. However, countries like the US, Russia, and Israel are opposing highly restrictive language, complicating this global governance effort.

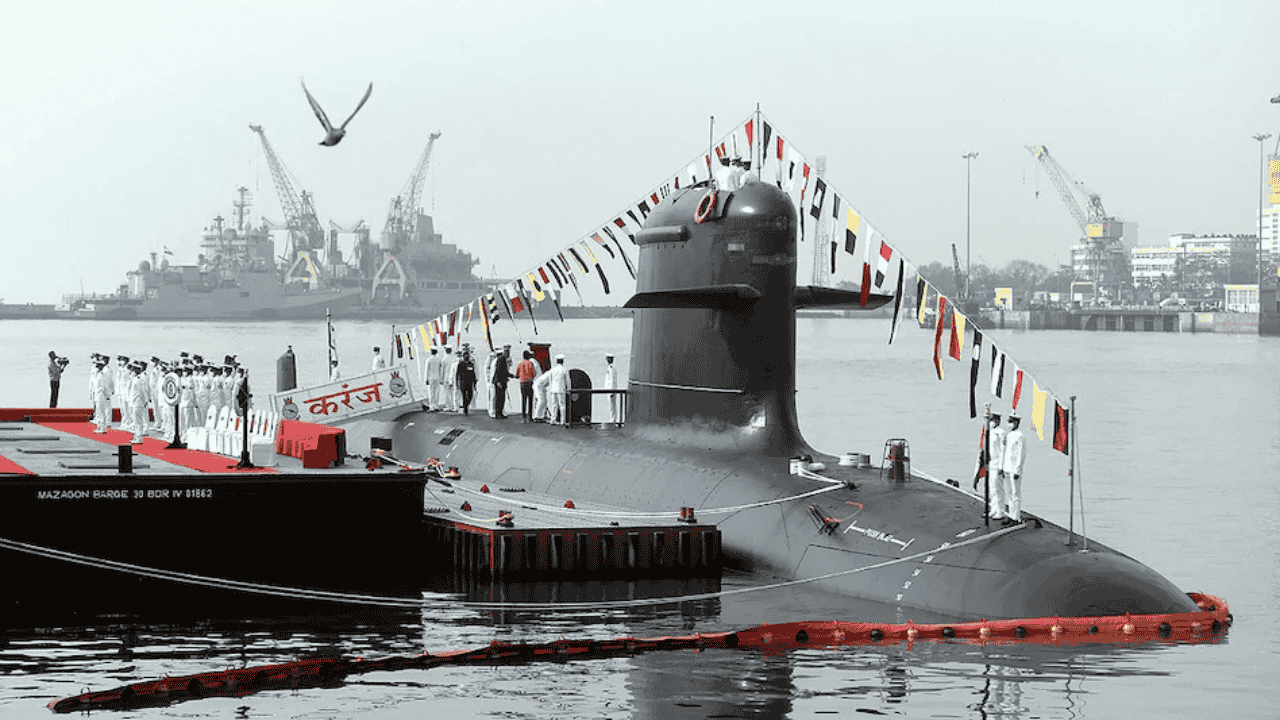

India’s Security and AI: 'Prajna' and Indigenous Solutions

For India, the development of technologies like Project Maven presents a dual challenge: the need to counter the AI capabilities of adversaries (especially China) while ensuring Technological Sovereignty.

Prajna: India’s Own AI-Powered Surveillance Mechanism

In April 2026, India introduced an indigenous AI-enabled satellite imaging system called 'Prajna' to strengthen its security framework. Developed by the Centre for Artificial Intelligence and Robotics (CAIR) under DRDO, this system is a major step for India's internal security and counter-terrorism operations.

Key Features of Prajna:

Real-time Intelligence: Uses AI algorithms to process high-resolution satellite imagery for real-time monitoring of sensitive areas.

Pattern and Anomaly Detection: Helps identify suspicious activities and infiltration routes along the borders.

Indigenous Innovation: Part of the 'Atmanirbhar Bharat' mission, aimed at reducing the risk of foreign AI stacks and 'Kill Switches.'
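Anomaly detection of the kind listed above can be illustrated with a simple statistical sketch in Python. This is not Prajna's method, which is not described in public detail here; the `flag_anomalies` helper, the threshold of 2.0, and the daily activity counts are hypothetical. The sketch flags any day whose count lies more than a chosen number of standard deviations from the average (a z-score test).

```python
from statistics import mean, stdev


def flag_anomalies(counts, threshold=2.0):
    """Return the indices of values whose z-score exceeds the threshold."""
    mu = mean(counts)
    sigma = stdev(counts)  # sample standard deviation
    if sigma == 0:
        return []  # every value is identical, so nothing stands out
    return [i for i, c in enumerate(counts) if abs(c - mu) / sigma > threshold]


# Hypothetical activity counts for one monitored grid cell over ten days.
daily_activity = [12, 11, 13, 12, 10, 11, 12, 40, 11, 12]

anomalous_days = flag_anomalies(daily_activity)
print(anomalous_days)  # [7]: the spike of 40 on the eighth day
```

Operational systems would work on imagery-derived features rather than a single count and would need to handle seasonality and sensor gaps, but the principle of comparing new observations with an established baseline is the same.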

India’s Comprehensive AI Defense Agenda (2026)

| Initiative | Description/Objective | Status (2026) |
|---|---|---|
| Prajna | AI-enabled satellite imaging system | Handed over to the Ministry of Home Affairs. |
| Swarm Drones | AI-powered drone swarms for LAC surveillance | Integrated by the Indian Army. |
| Cybergard AI | AI-powered SOCs to protect the power grid | Deployed nationally. |
| IndiaAI Mission | Sovereign compute and innovation | 38,000+ GPUs onboard. |

Analysis for UPSC Mains: GS-III and Internal Security

For UPSC aspirants, the significance of Project Maven lies more in its broad security implications than its technical details.

Strategic Implications of Algorithmic Warfare

Shift in Power Balance: AI can reduce traditional military advantages. Cheap autonomous Swarm Drones can overwhelm multi-billion dollar air defense systems—a phenomenon known as "Economic Asymmetry."

Decision Compression: The tempo of war has become so fast that human legal reviews risk becoming mere 'rubber stamps.'

Cognitive Warfare: Deepfakes and AI-driven misinformation campaigns are being used to destabilize democracies and break social cohesion.

Challenges for India

Foreign Dependency: Using foreign models (U.S. or Chinese) makes security agencies vulnerable to 'backdoors' and surveillance.

Black Box Problem: It is difficult to explain AI decision-making processes, making it challenging to distinguish between a software bug and a cyberattack during war.

Data Security: Protecting the massive datasets required for AI systems from cyber espionage is a Herculean task.

The Way Forward: Technological Sovereignty and Global Norms

Systems like Project Maven and Prajna are just the beginning. Future wars will be fought by algorithms, but winning them will still require human wisdom and ethics. To ensure its technological sovereignty, India must:

Indigenous Research: Develop native AI models through CAIR and deep-tech startups.

Global Partnerships: Co-develop sensitive technologies through collaborations like the India-US iCET (Initiative on Critical and Emerging Technology).

Ethical Guidelines: Establish clear 'Red Lines' for the use of autonomous weapons and norms for human control.

Why this matters for your exam preparation

GS Paper III (Internal Security & S&T): Project Maven falls under "Role of communication networks in internal security" and "Basics of cyber security." Indigenous systems like Prajna are direct examples of 'Atmanirbhar Bharat' and defense indigenization.

GS Paper II (International Relations): Global governance on AI (UN CCW) and U.S.-China tech rivalry are major IR themes.

Ethics (GS Paper IV): The issue of "Killer Robots" raises serious questions about the ethics of science and human responsibility in life-and-death decisions, making it well suited to Ethics case studies.

Aspirants are advised to include the data from this report (e.g., the statistics from Operation Epic Fury) and Indian initiatives (Prajna and IndiaAI Mission) in their answers to produce contemporary and data-driven responses. Stay updated with such in-depth analyses at Atharva Exam Wise.